WWDC 2018 Diary Of An iOS Developer

Lou Franco 2018-06-14T13:45:32+02:00

2018-06-14T17:15:49+00:00

The traditional boundaries of summer in the US are Memorial Day and Labor Day, but iOS developers mark the summer by WWDC and the iPhone release. Even though the weather is cool and rainy this week in NYC, I’m in a summer mood and looking forward to the renewal that summer and WWDC promise.

It’s the morning of June 4th, and I’m reviewing my notes from WWDC 2017. Last year, I wrote that ARKit and Core ML were two of the big highlights. It was refreshing to see Apple focus on Machine Learning (ML), but there wasn’t much follow-up in the rest of 2017. ARKit has spawned some interest, but no killer app (perhaps Pokémon Go, but that was popular before ARKit). After the Core ML announcement, Apple did not add to its initial library of downloadable Core ML models.

Apple did release Turi Create, and last month Lobe released an interesting new Core ML model maker. In the Apple/ML space, Swift creator Chris Lattner is taking a different approach with Swift for TensorFlow. But from the outside, Core ML seems to have mostly one obvious use: image classification. There doesn’t seem to be much energy around exploring wildly different applications, even though we all know that ML is at the core of self-driving cars and whiz-bang demos like Google Duplex.

Another way Apple uses ML is in Siri, and earlier this year, I wrote about SiriKit and mentioned its perceived and real deficiencies when compared to Alexa and Google. One issue I explored was how Siri’s emphasis on pre-defined intents limits its range but hasn’t produced the promised accuracy that you might get from a bounded focus.

The introduction of HomePod last year only highlighted Siri’s woes, and a widely reported customer satisfaction survey showed 98% satisfaction with iPhone X but only 20% satisfaction with Siri.

With all of this in the back of my mind, I was hoping to hear that Apple would make some major improvements in AR, ML, and Siri. Specifically, as an iOS developer, I wanted to see many more Core ML models, spanning more than just image classification, and more help in making models. For Siri, I wanted to see many more intents and possibly some indication that intents would be added year-round. For AR, the real next step is a device, which was a long shot; in the meantime, I hoped for increased spatial accuracy.

Finally, I love Xcode Playgrounds and iPad Playground books, but they need to be a lot faster and more stable, so I was hoping for something there too.

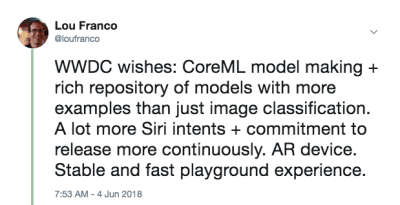

On the morning of WWDC, I tweeted this:

This wasn’t a prediction. It’s just a list of things I wanted to use in 2017 but found underpowered or too hard to get started with, and I was hoping Apple would improve them.

My plan for the day is to watch the keynote live, and then to watch the Platforms State of the Union. Those give a good overview of what to concentrate on for the rest of the week.

End Of Day 1: The Keynote And Platforms State Of The Union

The first day of WWDC consists of the keynote, which is meant for public consumption, and the Platforms State of the Union, an overview of the entire event with enough developer detail to help you choose which sessions to attend.

Summary Of Notable, Non-iOS Developer Announcements

WWDC is not entirely about iOS development, so here’s a quick list of announcements for the other platforms, along with some that are not very developer focused.

- To get it out of the way, there were no hardware announcements at all. No previews and no updates on the Mac Pro. We’ll have to wait for the iPhone and follow-on events in the fall.

- iOS 12 has a new Shortcuts app that seems to be the result of their acquisition of Workflow. It’s a way to “script” a series of steps via drag and drop. You can also assign the shortcut to a Siri keyword, which I’ll be covering below.

- iOS will automatically group notifications that are from the same app and let you act on them as a group.

- Animojis can now mimic you sticking out your tongue, and the new Memojis are highly configurable human faces that you can customize to look like yourself.

- FaceTime supports group video chat of up to 32 people.

- There is a new Screen Time app that gives you reports on your phone and app usage (to help you control yourself and be less distracted). It is also the basis of new parental controls.

- Apple TV got a small update: Support for Dolby Atmos and new screen savers taken from the International Space Station.

- The Watch got a competition mode for challenging others in workouts. It will also try to auto-detect the beginning and end of workouts in case you forget to start or stop them, and it now has Hiking and Yoga workouts.

- The Watch also has a new Walkie-Talkie mode that you can enable for trusted contacts.

- There are more audio SDKs that are native on the Watch, and Apple’s Podcasts app is now available. I expect third-party podcast apps will take advantage of these new SDKs as well.

- The Mac got the anchor spot of the event (which is hopefully an indication of renewed attention). It will be called macOS Mojave and features a dark mode.

- There are big updates to the Mac App Store; most notably, it now gets the same visual and content treatment the iOS App Store got last year. There are also enough changes to the sandbox that Panic has decided to move Transmit back to the Mac App Store.

- Quick Look in the Finder now has some simple actions you can do to the file (e.g. rotating an image) and is customizable via Automator.

- Mojave will be the last version of macOS to support 32-bit apps and frameworks, which means the QuickTime framework is going away. It has seemingly been replaced with some video capture features in the OS itself.

- Apple announced that they are internally using a port of UIKit to make Mac apps and showed ports of Stocks, News, Home, and Voice Memos. The new framework will be released in 2019.

The iOS Developer Announcements I’m Most Excited About

iOS developers got some good news as well. Apple hit on the four major areas where I wanted to see improvement:

- SiriKit now has custom intents, which opens up the possibilities quite a bit.

- Create ML is a new way to use Xcode Playgrounds to train models via transfer learning, which lets you augment existing models with your own training data.

- Xcode playgrounds now allow you to add code to the bottom of a page and run it without restarting. It’s hard to know if Playgrounds will be more stable until we get a real release in September, but this will make trying code much faster.

- ARKit 2 was announced along with a new Augmented Reality file format called USDZ, which is open and was developed with Pixar. Adobe announced some tooling support already. It will allow users and developers to store and share AR assets and experiences. In addition, ARKit 2 allows multiple devices to be in the same AR environment and supports 3D object detection.

We didn’t get an AR device, but it sure feels like we’ll get one soon. And it needs to come from Apple (not third parties) because running ARKit requires an iOS device.

Setting Up Your Machine

Everything you need is available now in the developer portal. To use the code in the article, you need the Xcode 10 Beta. I would not recommend using iOS 12 Betas yet, but if you really want to, go to the portal on your device and download the iOS 12 Beta Configuration Profile.

The only major thing you need a device with the beta for is ARKit 2. Everything else should run well enough in Xcode 10’s simulator. As of the first beta, Siri Shortcut support in the simulator is limited, but there is enough there to think that that will be fixed in future releases.

End Of Day 2: Playing With Siri Custom Intents

In my earlier SiriKit article, I wrote about how you needed to fit within one of Apple’s pre-defined intents in order to use SiriKit in your app. This mechanism was introduced in 2016 and extended in 2017, and even between WWDC events. But it was clear that Amazon’s approach of custom intents was superior for getting voice control into more diverse apps, and Apple added that to SiriKit last week.

To be clear, this is a first implementation, so it’s not as extensive as Alexa Skills just yet, but it opens up Siri’s possibilities quite a bit. As I discussed in the previous article, the main limitation of custom intents is that the developer needs to do all of the language translation. SiriKit gets around this a little by asking the user to provide the phrase that they’d like to use, but there is still more translation needed for custom intents than for predefined intents.

And Apple built them on the same foundation as the predefined intents, so everything I covered still applies. In fact, I will show you how to add a new custom intent to List-o-Mat, the app I wrote for the original SiriKit article.

(Free) Siri Shortcut Support If You Already Support Spotlight

If you use NSUserActivity to indicate things in your app that your user can initiate via handoff or search, then it’s trivial to make them available to Siri as well.

All you need to do is add the following line to your activity object:

```swift
activity.isEligibleForPrediction = true
```

This will only work for Spotlight-enabled activities (where isEligibleForSearch is true).

Now, when users do this activity, it is considered donated for use in Siri. Siri will recommend very commonly done activities or users can find them in the Shortcuts app. In either case, the user will be able to assign their own spoken phrase in order to start it. Your support for starting the activity via Spotlight is sufficient to support it being started via a shortcut.

In List-o-Mat, we could make the individual lists available to Spotlight and Siri by constructing activity objects and assigning them to the ListViewController. Users could open them via Siri with their own phrase.
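Here is a minimal sketch of what that could look like, assuming iOS 12. The activity type string, the makeOpenListActivity(listName:) function, and the listName property are hypothetical illustrations, not List-o-Mat’s actual code. A real app would also declare the activity type under NSUserActivityTypes in its Info.plist.

```swift
import UIKit
import Intents // adds suggestedInvocationPhrase to NSUserActivity on iOS 12

// Hypothetical activity type, for illustration only
let openListActivityType = "com.example.listomat.openList"

/// Builds an activity that Spotlight can index and Siri can suggest.
@available(iOS 12.0, *)
func makeOpenListActivity(listName: String) -> NSUserActivity {
    let activity = NSUserActivity(activityType: openListActivityType)
    activity.title = "Open \(listName)"
    activity.userInfo = ["listName": listName]
    activity.isEligibleForSearch = true     // Spotlight eligibility, needed for prediction
    activity.isEligibleForPrediction = true // makes the activity available to Siri
    activity.suggestedInvocationPhrase = "Open \(listName)"
    return activity
}

// Hypothetical version of the view controller that shows a single list
class ListViewController: UIViewController {
    var listName = ""

    override func viewDidAppear(_ animated: Bool) {
        super.viewDidAppear(animated)
        guard #available(iOS 12.0, *) else { return }
        // Attach the activity to this responder and make it current,
        // so iOS can index and donate it while the list is on screen
        let activity = makeOpenListActivity(listName: listName)
        userActivity = activity
        activity.becomeCurrent()
    }
}
```

Opening the list from Spotlight or from a Siri shortcut would then go through the same user activity continuation code the app already has for search results.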

It’s redundant in our case because we had a pre-defined intent for opening a list, but most apps are not so lucky, and they now have this simple mechanism. So, if your app has activities that aren’t supported by Siri’s pre-defined intents (e.g. playing a podcast), you can just make them eligible for prediction and not worry about custom intents.

Configuring SiriKit To Use Custom Intents

If you do need to use a custom intent, then SiriKit needs to be added to your app, which requires a bit of configuration.

All of the steps for configuring SiriKit for custom intents are the same as for predefined intents, which is covered in detail in my SiriKit article here on Smashing. To summarize:

- You are adding an extension, so you need a new App ID and provisioning profile, and your app’s entitlements need to have Siri added.

- You probably need an App Group (it’s how the extension and app communicate).

- You’ll need an Intents Extension in your project

- There are Siri specific .plist keys and project entitlements you need to update.

All of the details can be found in my SiriKit article, so I’ll just cover what you need to support a custom intent in List-o-Mat.

Adding A Copy List Command To List-o-Mat

Custom intents are meant to be used only where there is no pre-defined intent, and Siri does actually offer a lot of list and task support in its Lists and Notes Siri Domain.

But one way to use a list is as a template for a repeated routine or process. To do that, we’ll want to copy an existing list and uncheck all of its items. The built-in List intents don’t support this action.

First, we need to add a way to do this manually. Here is a demo of this new behavior in List-o-Mat:

To get this behavior to be invokable by Siri, we’ll “donate an intent,” which means telling iOS every time the user does this. Then, iOS will eventually learn that, for example, the user likes to copy this list in the morning, and offer it as a shortcut. Users can also look for donated intents and assign phrases manually.

Creating The Custom Intent

The next step is to create the custom intent in Xcode. There is a new file template, so:

- Choose File → New File and pick “SiriKit Intent Definition File”.

Choose to add an intent definition file

- Name the file ListOMatCustomIntents.intentdefinition, and choose to put the file in both the App and Intent Extension targets. Xcode will automatically generate the intent and response classes, along with an intent-handling protocol, in both targets; you supply the custom behavior by implementing that protocol.

- Open the Definition file.

- Use the + button on the bottom left to add an intent and name it “CopyList”.

- Set the Category to “Create” and fill in the title and subtitle to describe the intent:

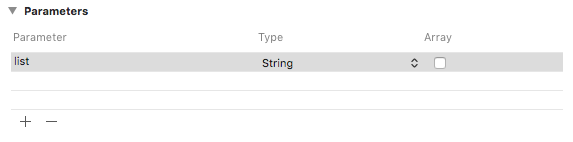

Add a category, title, and subtitle to the intent

- Add a String parameter named “list”.

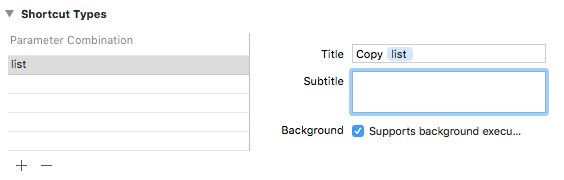

Add a String parameter named “list”

- Add a shortcut type with the list parameter and give it the title “Copy list”.

Add a shortcut type titled “Copy list”

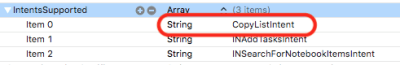

If you look in the Intent plist, you will see that this intent has already been configured for you:

Donating The Intent

When we do a user interaction in our app that we want Siri to know about, we donate it to Siri. Siri keeps track of contextual information, like the time, day of the week, and even location, and if it notices a pattern, it will offer the shortcut to the user.

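To donate the intent each time the user copies a list from the Copy menu, add this method to the view controller (the file also needs to import Intents) and call it from that action:

```swift
@available(iOS 12, *)
func donateCopyListInteraction(listName: String) {
    // Build the auto-generated intent and fill in its parameter
    let copyListIntent = CopyListIntent()
    copyListIntent.list = listName
    copyListIntent.suggestedInvocationPhrase = "Copy \(listName)"

    // Wrap the intent in an interaction and donate it to Siri
    let interaction = INInteraction(intent: copyListIntent, response: nil)
    interaction.donate { [weak self] error in
        // error is nil when the donation succeeds;
        // show(error:) is the app's own error-display helper
        if let error = error {
            self?.show(error: error)
        }
    }
}
```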

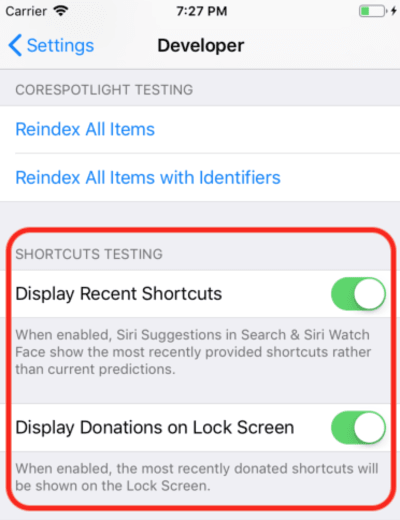

This simply creates an object of the auto-generated CopyListIntent class and donates it to Siri. Normally, iOS would collect this info and wait for the appropriate time to show it, but for development, you can open the Settings app, go to the Developer section, and turn on Siri Shortcut debugging settings.

Note: As of this writing, with the first betas, this debug setting works only on devices, not in the simulator. Since the setting is there, I expect it to start working in later betas.

When you do this, your donated shortcut shows up in Siri Suggestions in Spotlight.

Tapping that suggestion calls into your Intents extension, because the shortcut type allows background execution (the “Supports background execution” option in the intent definition file). We’ll add support for that next.

Handling The Custom Intent

We already have an Intents extension, and since the intent definition file is also a member of the extension target, the extension has the generated intent classes too. All we need to do is add a handler.

The first step is to add a new class named CopyListIntentHandler to the extension. Here is its code:

```swift
import Intents

@available(iOS 12, *)
class CopyListIntentHandler: ListOMatIntentsHandler, CopyListIntentHandling {

    func handle(intent: CopyListIntent, completion: @escaping (CopyListIntentResponse) -> Void) {
        // Find the list by name, ignoring case
        // (loadLists, copyList, and save are List-o-Mat helpers not shown here)
        var lists = loadLists()
        guard
            let listName = intent.list?.lowercased(),
            let listIndex = lists.firstIndex(where: { $0.name.lowercased() == listName })
        else {
            completion(CopyListIntentResponse(code: .failure, userActivity: nil))
            return
        }

        // Copy the list to the top, and respond with success
        copyList(from: &lists, atIndex: listIndex, toIndex: 0)
        save(lists: lists)

        let response = CopyListIntentResponse(code: .success, userActivity: nil)
        completion(response)
    }
}
```

Custom intents only have confirm and handle phases (custom resolution of parameters is not supported). Since confirm() is optional (leaving it out lets the intent go straight to handling), we’ll just implement handle(), which has to look up the list, copy it, and let Siri know whether it succeeded.
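The handler calls a copyList(from:atIndex:toIndex:) helper whose code isn’t shown. Here is one possible sketch of that helper and of the case-insensitive lookup, using hypothetical TodoList and TodoItem model types rather than List-o-Mat’s real ones. It assumes the copy keeps the original name and that “unchecking” means setting a done flag to false.

```swift
// Hypothetical model types; List-o-Mat's real types may differ
struct TodoItem: Equatable {
    var name: String
    var done: Bool
}

struct TodoList: Equatable {
    var name: String
    var items: [TodoItem]
}

/// Finds a list by name, ignoring case, like the guard in handle(intent:completion:).
func indexOfList(named name: String, in lists: [TodoList]) -> Int? {
    let target = name.lowercased()
    return lists.firstIndex(where: { $0.name.lowercased() == target })
}

/// Inserts a copy of lists[atIndex] at toIndex with every item unchecked,
/// leaving the original list untouched so it keeps working as a template.
func copyList(from lists: inout [TodoList], atIndex: Int, toIndex: Int) {
    var copy = lists[atIndex] // structs are values, so this is an independent copy
    for index in copy.items.indices {
        copy.items[index].done = false
    }
    lists.insert(copy, at: toIndex)
}
```

Because the model types are structs, mutating the copy can never uncheck items in the template list it came from.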

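You also need to dispatch to this class from the extension’s registered intent handler, in its handler(for:) method, by adding this code:

```swift
if #available(iOS 12, *) {
    // Route the custom intent to its dedicated handler
    if intent is CopyListIntent {
        return CopyListIntentHandler()
    }
}
```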

Now you can actually tap that Siri suggestion and it will bring this up:

And tapping the Create button will copy the list. The button says “Create” because of the category we chose in the intent definition file.

Phew, that was a lot. Siri shortcuts are the iOS 12 feature with the largest new developer surface area to explore. Also, since I happened to have a good (and documented) Siri example to work with, it was reasonable to add the new features to it this week.

You can see the updated List-o-Mat on GitHub. Until Xcode 10 and iOS 12 are released, it’s in its own branch.

The next few days, I’ll mostly be looking at Apple sample code or making much smaller projects.

End Of Day 3: Xcode Playgrounds

I spent the entire previous day in the Xcode 10 beta, which didn’t crash once and seemed ready for development. So now I wanted to explore the new Playgrounds features.

What I wanted most from Playgrounds was for them to be more stable and much faster. To make them faster, Apple added a big feature: a REPL mode.

Before Xcode 10, when you were in a Playground that had auto-run on (the default), every change rebuilt the entire file and ran it from the beginning, so any state you had built up was lost. But the real issue was that this was far too slow for iterative development. When I use Playgrounds, I set them to run manually, but even that is slow.

In Xcode 10, manual running is more the norm, but after you run it, you can add more lines at the bottom of the page and continue execution. This means you can explore data and draw views iteratively without constantly rebuilding and starting from scratch.

To get started, I created an iOS playground (File → New → Playground) with the Single View template.

Turn on manual running by bringing down the menu below the Play button (the triangle in the bottom left corner). This puts a vertical strip to the left that shows the current position of the Play head (kind of like breakpoints).

You can click any line and then click the play button to its left. This runs the Playground up to that point. Then you can go further by clicking lines lower in the Playground. Critically, you can add more lines to the bottom and press Shift + Enter after each one to move the Play head to that point.

Here’s a GIF of me changing the label of a view without needing to restart the Playground. After each line I type, I am pressing Shift + Enter.

Playgrounds also support custom rendering of your types now, and Apple is making a big push for every Swift framework to include a Playground to document it.
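One way to control how your own types show up in the results sidebar, assuming Swift 4.1 or later, is the standard library’s CustomPlaygroundDisplayConvertible protocol: the playground displays whatever playgroundDescription returns instead of its default view of the value. The Checklist type below is hypothetical.

```swift
// Hypothetical type used only to illustrate custom playground rendering
struct Checklist {
    var name: String
    var done: Int
    var total: Int
}

extension Checklist: CustomPlaygroundDisplayConvertible {
    // Playgrounds show this value in the results sidebar
    // instead of the default field-by-field view of the struct
    var playgroundDescription: Any {
        return "\(name): \(done)/\(total) done"
    }
}

let morning = Checklist(name: "Morning", done: 2, total: 5)
morning // in a playground, the sidebar shows the summary string
```

Returning a String keeps the sketch simple; playgroundDescription is typed as Any, so a type could return something richer, such as an image, instead.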

End Of Day 4: Create ML

Last year, Apple made a big leap for programming Machine Learning for their devices. There was a new ML model file format and direct support for it in Xcode.

The potential was that there would be a large library of these model files, that there would be tools that would create them, and that many more app developers would be able to incorporate ML into their projects without having to know how to create models.

This hasn’t fully materialized. Apple didn’t add to the repository of models after WWDC, and although there are third-party repositories, they mostly have models that are variations on the image classification demos. ML is used for a lot more than image classification, but a broad selection of examples did not appear.

So, it became clear that any real app would need its developers to train new models. Apple released Turi Create for this purpose, but it’s far from simple.

At WWDC 2018, Apple did a few things to Core ML:

- They expanded the Natural Language Processing (NLP) part of Core ML, which gives us a new major domain of examples.

- They added the concept of Transfer Learning to Core ML, which allows you to add training data to an existing model. This means you can take models from the library, and customize them to your own data (for example, have them recognize new objects in images you provide).

- They released Create ML, which runs inside Xcode Playgrounds and lets you drag and drop training data to generate models that extend existing ones (using Transfer Learning).

This is another nice step in democratizing ML. There’s not much code to write here. To extend an image classifier, you just need to gather and label images. Once you have them, you just drag them into Create ML. You can see the demo in this Create ML WWDC video.

End Of The Week: Play With The New AR Demos

ARKit was another big addition last year, and it seems even clearer that an AR device is coming.

My ARKit code from last year’s article is still a good way to get started. Most of the new features are about making AR more accurate and faster.

After that, if you have installed a beta, you will definitely want to download the new SwiftShot ARKit demo app. This app takes advantage of the new features of ARKit, especially the multiplayer experience. Two or more devices on the same network and in the same place can communicate with each other and see the same AR experience.

Of course, to play this, you need two or more devices you are willing to put on the iOS 12 beta. I’m waiting for the public beta to do this because I only have one beta-safe device.

The easier AR app to play with is the new Measure app, which allows you to measure the length of real objects you see in AR camera view. There have been third-party apps that do this, but Apple’s is polished and pre-installed with iOS 12.

Links To WWDC Videos And Sample Code

So, I’m looking forward to doing more with Xcode 10 and iOS 12 this summer while we wait for the new phones and whatever devices Apple might release at the end of the summer. In the meantime, iOS developers can enjoy the sun, track our hikes with the new watchOS beta, and watch these WWDC videos when we get a chance.

You can stream WWDC 2018 videos from the Apple developer portal. There is also this unofficial Mac App for viewing WWDC videos.

Here are the videos referenced in this article:

- WWDC 2018 Keynote

- WWDC 2018 Platforms State of the Union

- Introduction to Siri Shortcuts

- Getting the Most out of Playgrounds in Xcode

- Introducing Create ML, and if you want something more advanced, A Guide to Turi Create

To start playing with Xcode 10 and iOS 12:

- Download the betas (visit on a device to get the beta profile)

- List-o-Mat with Siri Shortcut updates

- SwiftShot (the multi-player ARKit 2 game)

(ra, il)


Source: Smashingmagazine.com